Overview

- Researchers examined 13,065,081 electronic health record notes from 2016–2023 covering 1,537,587 patients and 12,027 clinicians at a large mid-Atlantic U.S. health system.

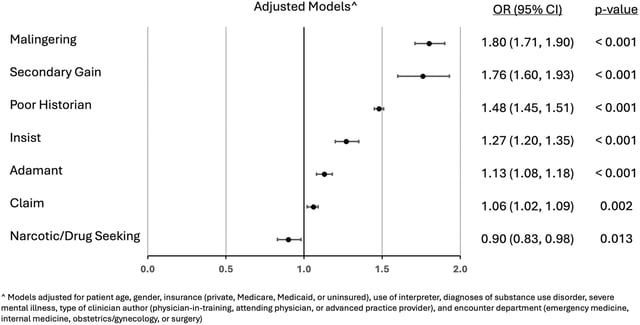

- Natural language processing flagged words and phrases suggesting clinicians doubted patient sincerity or competence, such as “claims,” “insists” or “poor historian.”

- Overall, fewer than 1% of notes contained credibility-undermining language, but notes on non-Hispanic Black patients had 29% higher adjusted odds of such language than those on white patients (aOR 1.29).

- The disparity varied by type of doubt. Notes on Black patients had higher adjusted odds of language undermining sincerity (aOR 1.16) and competence (aOR 1.50), and 18% lower adjusted odds of language supporting credibility (aOR 0.82).

- The study authors recommend integrating unconscious bias training into medical education and developing bias-aware AI tools to screen clinician notes. They note two limitations: the data come from a single health system, and the accuracy of the NLP method is limited.
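
To make the method and the statistics above more concrete, here is a minimal sketch in Python. It is not the study's NLP pipeline, which this summary does not describe. It assumes a simple case-insensitive phrase match over a hypothetical lexicon containing only the three example terms quoted above. The helper names `flag_doubt_terms` and `percent_change_in_odds` are illustrative. The second helper shows how an adjusted odds ratio maps to the "29% higher" and "18% lower" figures.

```python
import re
from typing import List

# Hypothetical lexicon: only the three example terms quoted in the summary.
# The study's real term list and model are not given here.
DOUBT_TERMS = ("claims", "insists", "poor historian")

# One case-insensitive pattern that matches any term on word boundaries.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in DOUBT_TERMS) + r")\b",
    re.IGNORECASE,
)


def flag_doubt_terms(note: str) -> List[str]:
    """Return the lexicon terms found in a note, lowercased, in order of appearance."""
    return [match.group(1).lower() for match in _PATTERN.finditer(note)]


def percent_change_in_odds(odds_ratio: float) -> int:
    """Convert an odds ratio to a rounded percent change in odds.

    This is a change in odds, not in probability.
    """
    return round((odds_ratio - 1.0) * 100)


if __name__ == "__main__":
    sample = "Patient claims pain is 10/10 and INSISTS on imaging; poor historian."
    print(flag_doubt_terms(sample))
    for label, odds_ratio in [("any doubt", 1.29), ("sincerity", 1.16),
                              ("competence", 1.50), ("supportive", 0.82)]:
        print(label, percent_change_in_odds(odds_ratio))
```

A plain keyword match like this would flag neutral uses too (for example, "insurance claims"), which is one reason the accuracy of any NLP approach is a stated limitation. The percentages describe odds, so an aOR of 1.29 does not mean such language is 29% more probable in absolute terms.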