Overview

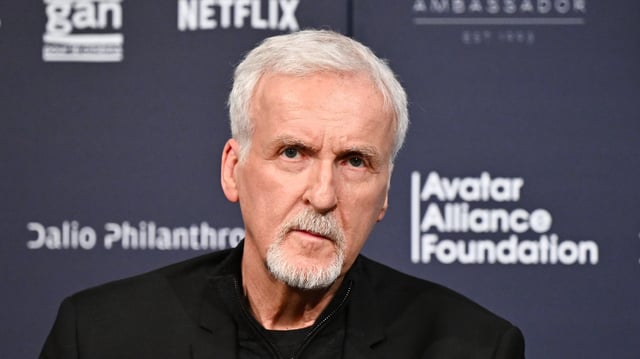

- Cameron cautions that linking AI to weapons systems, including nuclear defense counterstrikes, could unleash a real-world catastrophe reminiscent of his Terminator saga.

- He argues that AI-enabled combat may unfold faster than humans can react, so critical decisions could require superintelligent processing.

- Recalling past nuclear near-misses, he highlights human fallibility as a danger, particularly when oversight of automated defense networks breaks down.

- Cameron frames AI, climate change, and nuclear arsenals as three converging existential threats at a pivotal moment.

- While warning of AI’s military perils, he embraces generative tools as a Stability AI board member, aiming to halve visual-effects costs. He is also adapting *Ghosts of Hiroshima* for the big screen.